Last updated on 30 April 2026

With modern agentic development systems such as Anthropic’s Claude Code and comparable AI engineering platforms, the software industry has reached a point where substantial parts of the traditional development cycle can be massively accelerated. In professional development environments, it is now realistic to produce the implementation scope of a traditional two-week sprint within just a few hours—including large portions of the associated test artifacts. Yet this new speed is leading to a fundamental misinterpretation in many organizations: Faster code does not automatically mean better software.

Every experienced software engineer, architect, or quality engineer knows that speed does not automatically correlate with maintainability, scalability, robustness, or sustainability. On the contrary: without structural adaptation of the Software Development Lifecycle (SDLC), agentic coding can lead to quality degradation, integration chaos, and organizational dysfunction. Many teams are already observing early symptoms: QA is perceived as a bottleneck, merge conflicts and integration issues are increasing significantly, architecture is drifting more rapidly, technical debt is growing despite higher development velocity, and functional inconsistencies are accumulating.

The common conclusion is: “Our quality assurance can no longer keep up with the pace of development.” But in many cases, the root cause is not inefficient quality assurance—rather, existing delivery and quality processes simply weren’t designed for the new development velocity. Organizations that want to leverage agentic coding professionally must accept that the Software Development Lifecycle in the age of agentic development needs to be redefined.

Agentic Coding Changes More Than Implementation—It Transforms the Entire Engineering Model

The biggest misconception in current discussions is reducing agentic coding to mere code generation. Professional agentic coding in an enterprise context has nothing in common with so-called “vibe coding”—the practice of generating code through unstructured prompts and using the result without systematic validation. Rather, it is a structured engineering approach with clearly defined quality and governance mechanisms.

Professional agentic coding specifically means:

- Deterministic and traceable engineering processes

- Structured specification chains

- Quality-assured artifact production

- Controlled context handoffs

- Systematic validation at every level of the SDLC

This fundamentally shifts the bottleneck in software development: Implementation is no longer the primary cost and time driver—engineering quality is. The central question going forward is no longer: “How fast can we write code?” but rather: “How do we ensure that machine-generated artifacts are correct, maintainable, architecture-compliant, and evolvable over the long term?”

Why Traditional Agile Delivery Models Hit Their Limits Under Agentic Coding

Many agile teams today implicitly operate with an incremental interpretation of traditional development models.

Even when Scrum or Kanban is used, teams within a sprint effectively cycle through recurring engineering activities that correspond to classical development phases, albeit iteratively, with overlap, and not in strict sequence. These include in particular:

- Requirements analysis

- Specification

- Architecture and design decisions

- Implementation

- Reviews (e.g., architecture, design, and code reviews)

- Verification and validation

Reviews are a distinct engineering step, as they do not primarily verify functional correctness but rather ensure quality on other levels—particularly architecture conformity, maintainability, security aspects, comprehensibility, and adherence to technical standards.

In the age of agentic development, one thing becomes clear: these phases must be made explicit and quality-controlled. When implementation accounts for a much smaller share of the effort than specification, validation, and quality assurance, the quality lever shifts entirely to the upstream phases. This is where the V-Model provides a surprisingly modern foundation.

Why the V-Model Becomes More Relevant Than Ever in the Agentic Era

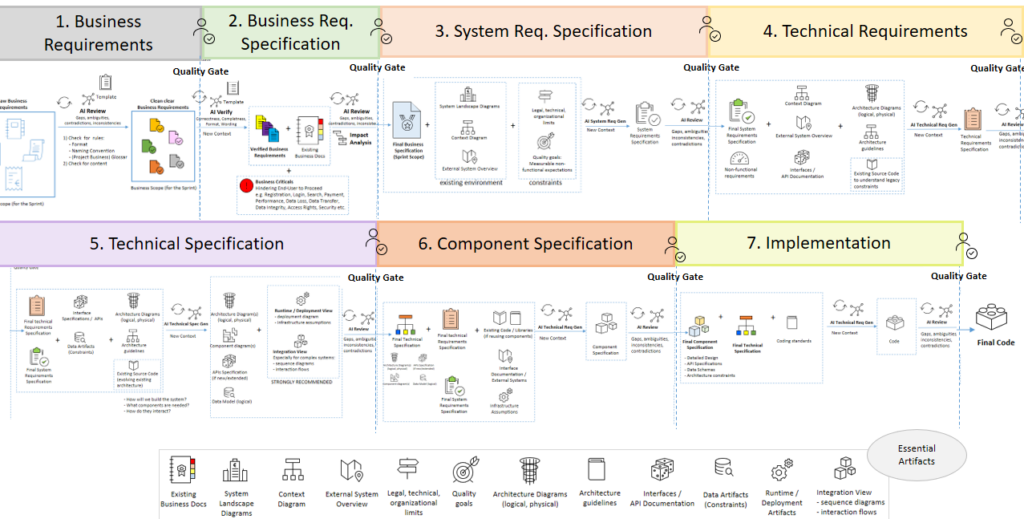

The classic V-Model structures development into seven progressive levels: (1) Business Requirements, (2) Business Specification, (3) System Requirements, (4) Technical Requirements, (5) Technical Specification, (6) Component Specification, and (7) Implementation.

Historically, the V-Model was often criticized as too heavyweight. Under agentic coding, however, a new reality emerges: agentic systems deliver better results the more precise and structured their inputs and context are, so the rigor of the upstream phases directly determines the quality of the machine-generated output.

This means the V-Model does not become a bureaucratic relic but rather the ideal foundation for deterministic AI governance, phase-based quality gates, formalized context handoffs, measurable specification quality, and reproducible development processes.
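
To make the idea of phase-based quality gates concrete, here is a minimal sketch in Python. It assumes each of the seven levels has a single pass/fail gate and that work may only proceed in the first level whose gate is still open. The function name `next_allowed_phase` and the gate dictionary are illustrative assumptions, not part of any specific toolchain.

```python
from enum import IntEnum


class Phase(IntEnum):
    """The seven V-Model levels named in the text, in order."""
    BUSINESS_REQUIREMENTS = 1
    BUSINESS_SPECIFICATION = 2
    SYSTEM_REQUIREMENTS = 3
    TECHNICAL_REQUIREMENTS = 4
    TECHNICAL_SPECIFICATION = 5
    COMPONENT_SPECIFICATION = 6
    IMPLEMENTATION = 7


def next_allowed_phase(gate_passed: dict[Phase, bool]) -> Phase:
    """Return the first phase whose quality gate has not been passed yet.

    Hypothetical policy for illustration: later phases stay blocked
    until every earlier gate is marked as passed.
    """
    for phase in Phase:  # IntEnum iterates in definition order
        if not gate_passed.get(phase, False):
            return phase
    return Phase.IMPLEMENTATION  # all gates passed; the last phase is done


gates = {
    Phase.BUSINESS_REQUIREMENTS: True,
    Phase.BUSINESS_SPECIFICATION: True,
    Phase.SYSTEM_REQUIREMENTS: False,
}
print(next_allowed_phase(gates).name)  # SYSTEM_REQUIREMENTS
```

In this sketch, an orchestrator would refuse to generate technical artifacts while the System Requirements gate is open, which is the blocking behavior the gate concept implies.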

Source Code Alone Is No Longer a Sufficient Source of Truth

A key insight from agentic software development is: Source code alone is no longer sufficient as a knowledge base for sustainable software development. AI systems only deliver high-quality results when their context is complete, consistent, and structured.

For sustainable quality, professional agentic development additionally requires at least the following artifact classes:

System Landscape & Context Artifacts

Document system boundaries, business domains, integration partners, responsibilities, and system contexts.

Architecture Artifacts

Architecture principles, ADRs, patterns, layering, quality attributes, and constraints.

Interface Artifacts

API specifications, event contracts, message schemas, and consumer/provider agreements.

Data Artifacts

Domain models, data flows, schemas, validation rules, and ownership definitions.

Runtime & Deployment Artifacts

Deployment models, infrastructure topologies, security/runtime constraints, and observability requirements.

These artifacts are essential to ensure that AI-generated solutions remain systemically consistent, architecture-compliant, and operationally viable. In the full visualization of the agentic V-Model (see the section “When the V-Model Is Agentically Orchestrated, Delivery Shrinks from Weeks to Hours”), they are shown as “Essential Artifacts.”
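
One way to operationalize these artifact classes is a completeness check that runs before context is handed to an agent. The following Python sketch assumes a context bundle is a simple mapping from artifact class to document paths. The class keys and the file paths are hypothetical.

```python
# The five artifact classes listed above, as hypothetical bundle keys.
REQUIRED_ARTIFACT_CLASSES = (
    "system_landscape_and_context",
    "architecture",
    "interface",
    "data",
    "runtime_and_deployment",
)


def missing_artifact_classes(context_bundle: dict[str, list[str]]) -> list[str]:
    """List the artifact classes that are absent or empty in the bundle."""
    return [c for c in REQUIRED_ARTIFACT_CLASSES if not context_bundle.get(c)]


# Example bundle with made-up paths: two classes are missing, one is empty.
bundle = {
    "architecture": ["adr/0007-event-bus.md"],
    "interface": ["api/orders.openapi.yaml"],
    "data": [],
}
print(missing_artifact_classes(bundle))
# ['system_landscape_and_context', 'data', 'runtime_and_deployment']
```

A check like this only verifies presence, not quality. Whether an artifact is consistent and current still needs its own review.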

The Agentic V-Model: Quality Through AI-Driven Quality Gates

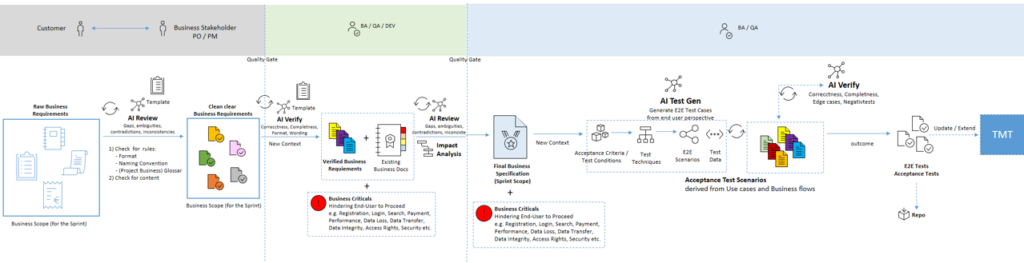

In the professional agentic SDLC, every phase of the V-Model becomes a quality-assured handoff point. The illustration shows the process using the Business Requirement and Business Specification phases as examples:

Business Requirement Phase

Raw requirements first undergo an AI-supported review that systematically checks for functional gaps, inconsistencies, contradictions, glossary and terminology violations, naming and formatting violations, and ambiguous language (see illustration). Only after multiple review iterations does a requirement pass the first quality gate.
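
The review described here is AI-supported. As a deliberately simplified, rule-based stand-in, the following Python sketch covers only two of the listed check categories: ambiguous language and glossary violations. The word lists, function names, and the sample requirement are assumptions for illustration.

```python
import re

# Hypothetical word lists; a real review would use the project's own glossary.
AMBIGUOUS_TERMS = {"fast", "user-friendly", "appropriate", "as needed"}
GLOSSARY_SYNONYMS = {"client": "customer", "purchase": "order"}  # forbidden -> preferred


def _contains(term: str, text: str) -> bool:
    """Whole-word, case-insensitive match of a term in the text."""
    return re.search(rf"\b{re.escape(term)}\b", text.lower()) is not None


def review_requirement(text: str) -> list[str]:
    """Return review findings; an empty list means no issue was detected."""
    findings = []
    for term in sorted(AMBIGUOUS_TERMS):
        if _contains(term, text):
            findings.append(f"ambiguous language: '{term}'")
    for forbidden, preferred in sorted(GLOSSARY_SYNONYMS.items()):
        if _contains(forbidden, text):
            findings.append(
                f"glossary violation: use '{preferred}' instead of '{forbidden}'"
            )
    return findings


def passes_quality_gate(text: str) -> bool:
    """The gate is passed only when the review yields no findings."""
    return not review_requirement(text)


draft = "The client must receive a fast confirmation."
for finding in review_requirement(draft):
    print(finding)
```

For the draft above, the sketch reports one ambiguity finding and one glossary finding, so the requirement would go back for another review iteration before passing the gate.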

Business Specification Phase

Based on validated requirements, existing business documentation, and maintained documentation of critical business processes, an agentic system generates a structured impact analysis: Which processes are affected? Which constraints apply? What risks arise? What side effects need to be considered?

Building on this, a Business Specification is created that encompasses use cases, user journeys, process models (BPMN/process flows), business rules, and functional scenarios with associated acceptance criteria.

Early Test Derivation as an Engineering Principle

Acceptance test scenarios are derived as early as the business level: decomposition into test conditions, risk-based test case identification, application of established test design techniques, and structuring according to standardized test principles. Testability is established before implementation, not after.
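
As one example of an established test design technique, the following Python sketch applies two-value boundary value analysis to a business rule before any implementation exists. The rule (an order quantity between 1 and 100) and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RangeRule:
    """A business rule accepting integers from minimum to maximum, inclusive."""
    name: str
    minimum: int
    maximum: int


def boundary_test_cases(rule: RangeRule) -> list[tuple[int, bool]]:
    """Two-value boundary value analysis.

    Yields each boundary and its nearest invalid neighbour, paired with
    the expected verdict (True = input must be accepted).
    """
    values = [rule.minimum - 1, rule.minimum, rule.maximum, rule.maximum + 1]
    return [(v, rule.minimum <= v <= rule.maximum) for v in values]


rule = RangeRule("order quantity", 1, 100)
for value, expected_valid in boundary_test_cases(rule):
    print(value, expected_valid)
# 0 False
# 1 True
# 100 True
# 101 False
```

Because the test cases are derived from the rule alone, they can serve as acceptance criteria that the later implementation has to satisfy.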

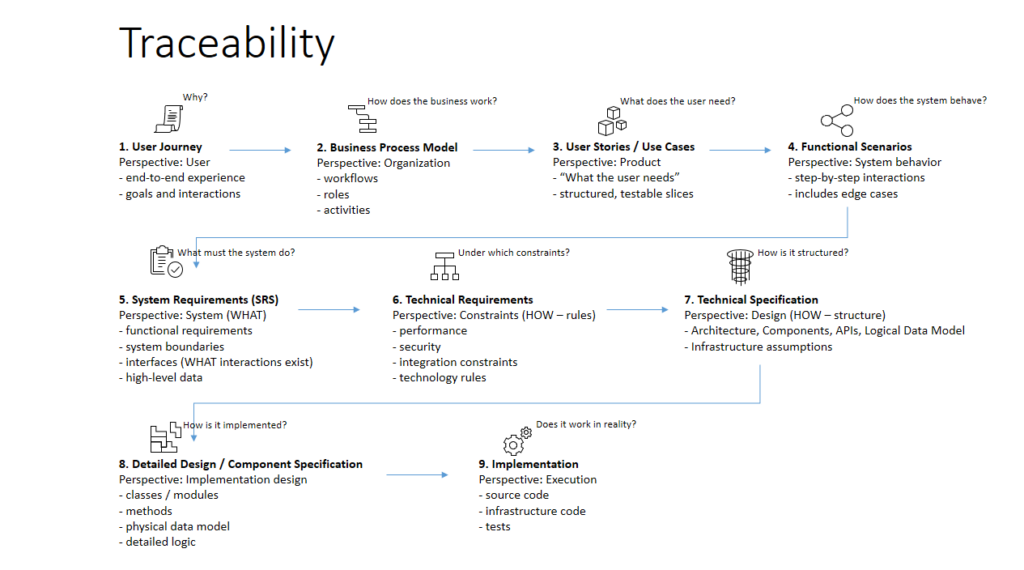

Traceability as a Control Mechanism of the Agentic SDLC

With increasing automation of the SDLC, traceability evolves from a traditional governance requirement into an operational control mechanism for the entire engineering process. In the agentic SDLC, it forms the foundation for deterministic AI orchestration, precise impact analyses, controlled artifact generation, and consistent change propagation. An agentically orchestrated SDLC is only manageable when end-to-end traceability exists across all engineering artifacts. The following illustration shows this chain of effect across all engineering levels:

End-to-end traceability ensures that every implementation can be traced back to validated requirements, technical decisions can be justified on a business level, test activities remain systematically derivable, and changes are propagated consistently across all artifact levels. In regulated domains, it also enables reliable audit and compliance evidence at any time.

Without end-to-end traceability, agentic coding quickly produces functioning code but without reliable integration into business context, system context, and overall architectural structure. The result is inconsistencies, architecture drift, and technical debt that compound as automation increases. Particularly in regulated and complex enterprise domains, traceability is therefore not optional documentation but a fundamental prerequisite for professional, controlled, and auditable agentic software development.
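
Impact analysis over trace links can be sketched as a graph traversal. The Python example below assumes trace links are stored as a mapping from each artifact to the artifacts derived from it. The artifact IDs and the link structure are invented for illustration.

```python
from collections import deque

# Hypothetical trace links: artifact -> artifacts derived from it.
TRACE_LINKS = {
    "BR-1": ["BS-1"],            # business requirement -> business specification
    "BS-1": ["SR-1", "AT-1"],    # specification -> system requirement, acceptance test
    "SR-1": ["TS-1"],            # system requirement -> technical specification
    "TS-1": ["CODE-orders"],     # technical specification -> implementation
    "AT-1": [],
    "CODE-orders": [],
}


def impacted_artifacts(changed: str, links: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk over downstream links from the changed artifact."""
    seen = {changed}
    impacted = []
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for child in links.get(current, []):
            if child not in seen:
                seen.add(child)
                impacted.append(child)
                queue.append(child)
    return impacted


print(impacted_artifacts("BS-1", TRACE_LINKS))
# ['SR-1', 'AT-1', 'TS-1', 'CODE-orders']
```

A change to the business specification thus surfaces every downstream artifact that needs regeneration or review, including the acceptance test and the code.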

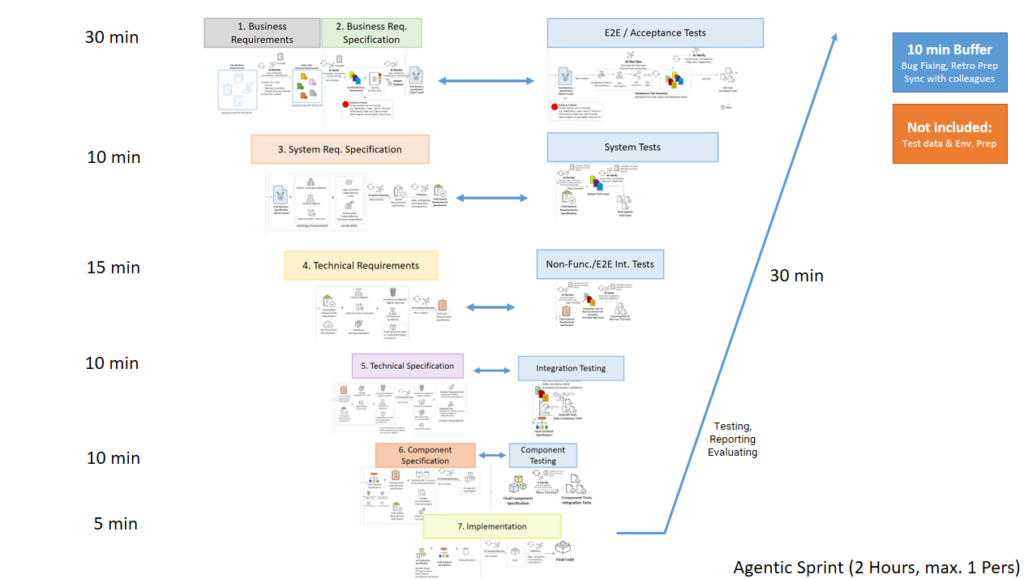

When the V-Model Is Agentically Orchestrated, Delivery Shrinks from Weeks to Hours

Once the agentic V-Model is fully operationalized, the lead time of clearly scoped sprint increments can be reduced from days or weeks to just a few hours. The following illustration shows the complete model with all seven phases, associated quality gates, and essential artifacts as the knowledge foundation:

An example workflow:

- Business Requirement & Business Specification: 30 min

- System Requirement: 10 min

- Technical Requirement: 15 min

- Technical Specification: 10 min

- Component Specification: 10 min

- Implementation: 5 min

- Testing / Reporting / Evaluation: 30 min

- Buffer for bug fixing / synchronization / retro prep: 10 min
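
Adding up the durations listed above shows how the effort is distributed. The short Python calculation below uses only the numbers from the list. The grouping of the first five entries as "specification" is an assumption made for this illustration.

```python
# Durations in minutes, taken from the example workflow above.
workflow_minutes = {
    "Business Requirement & Business Specification": 30,
    "System Requirement": 10,
    "Technical Requirement": 15,
    "Technical Specification": 10,
    "Component Specification": 10,
    "Implementation": 5,
    "Testing / Reporting / Evaluation": 30,
    "Buffer": 10,
}

total = sum(workflow_minutes.values())

# Assumption: the five requirement and specification entries count as upstream work.
specification = sum(list(workflow_minutes.values())[:5])
implementation = workflow_minutes["Implementation"]

print(total)                            # 120
print(f"{specification / total:.1%}")   # 62.5%
print(f"{implementation / total:.1%}")  # 4.2%
```

In this example the full cycle takes two hours, of which implementation accounts for about 4 percent, while requirements and specification work take up well over half.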

New Organizational Implications

Sprint scoping must be rethought

In highly agentic delivery models, the optimal scoping of sprint work packages shifts toward smaller, clearly delineated implementation units with clear individual ownership. Parallel agentic code production without coordination increases the risk of merge conflicts, unaligned architecture changes, specification inconsistencies, and integration instability. Larger initiatives should therefore be split into parallel module sprints, followed by dedicated integration sprints.
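
One simple way to check whether parallel module sprints are clearly delineated is to compare the paths each work package claims to own. The following Python sketch is a hypothetical illustration. The package names and paths are invented, and real ownership rules would likely be more fine-grained.

```python
from itertools import combinations


def ownership_overlaps(packages: dict[str, set[str]]) -> dict[tuple[str, str], set[str]]:
    """Return every pair of work packages that claims at least one shared path."""
    overlaps = {}
    for a, b in combinations(sorted(packages), 2):
        shared = packages[a] & packages[b]
        if shared:
            overlaps[(a, b)] = shared
    return overlaps


# Hypothetical work packages for three parallel module sprints.
packages = {
    "billing": {"billing/", "shared/money.py"},
    "orders": {"orders/", "shared/money.py"},
    "catalog": {"catalog/"},
}
print(ownership_overlaps(packages))
# {('billing', 'orders'): {'shared/money.py'}}
```

An overlap like the one above is a candidate for either re-scoping the packages or deferring the shared change to the integration sprint.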

The New Professional Profile in Software Engineering

Agentic coding fundamentally shifts the value contribution within development teams.

The traditional separation between Business Analyst, Software Engineer, QA Engineer, and Architect is increasingly blurring.

A new target profile is emerging: the AI-Augmented Systems Engineer—a professional with combined expertise in requirements engineering, software architecture, quality engineering, test design, systems thinking, and AI orchestration. Their core value contribution lies not primarily in writing code, but in defining high-quality specifications, evaluating architectural implications, validating machine-generated artifacts, orchestrating complex AI workflows, and ensuring systemic quality.

Boundaries and Prerequisites of Agentic Development

Agentic coding only realizes its benefits under certain prerequisites: high maturity of existing engineering artifacts, disciplined architecture and documentation maintenance, standardized delivery processes, high competency of the engineers in charge, and a suitable toolchain and governance framework.

These prerequisites align with principles that mgm has pursued for over two decades in the development of mission-critical systems: structured architecture, standardized processes, and high engineering discipline. Without these prerequisites, agentic coding cannot compensate for existing organizational and technical deficits—rather, it frequently amplifies them at an accelerated pace.

Conclusion

Agentic coding is not merely an acceleration of traditional development. It is a paradigm shift for all of software engineering. Organizations that simply layer agentic coding on top of existing delivery models will achieve more output in the short term—but will generate significant quality and integration problems in the medium term.

By contrast, organizations that rethink their SDLC, establish quality gates, and understand engineering as an orchestrated specification, validation, and governance process can, for the first time, achieve drastically higher delivery velocity at the same or higher quality, systematic traceability, and scalable enterprise development with AI.

Sustainable competitive advantage in the age of agentic development does not come from individual implementation speed, but from the quality, governability, and scalability of the underlying engineering system. Organizations that shape this transformation early will benefit significantly.