Last Updated on 11. December 2023

In software development, pivotal moments arise as the testing phase approaches. The countdown begins, and all eyes are on your QA team. Time and resources are scarce, while the number of test cases and activities escalates. It’s akin to a delicate balancing act on a narrow ledge that becomes increasingly challenging as the wind rises. Undetected errors may later grow into a storm of issues. How do you navigate this tightrope and deliver your software with the utmost quality while keeping your composure in this critical phase?

In the first part of the article series, we discussed significant challenges that can arise in quality assurance. In this second part, we explore how, through the right strategy, intelligent resource utilization, and a trained focus on the essentials, you can minimize risk in testing phases and maximize software quality – gaining strategic superiority.

Table of Contents

- Understanding Your End Users’ Browser Preferences

- Randomized Browsers Instead of Cross-Browser

- Number of Operating Systems Multiplies Test Execution Time

- Comprehensive Overview

- The Dance with Bugs: Prioritization

- Test Case Tetris: Efficient Management of Growing Test Cases

- Prioritizing Test Cases

- Sampling Approach

- Old and Unchanged

- New or Modified Features

- The Happy Path

- Where There’s Smoke, There’s Usually Fire

- Efficient Test Case Selection

- Third-Party Software

- Backdoor for Quality Assurance

- Is There a More Efficient Alternative?

- Summary

The testing strategy must be adaptable and flexible to respond to unforeseen circumstances. Even a well-prepared QA team can face unexpected challenges, be it hardware issues, delayed implementation of features, or faulty implementation of critical requirements. The ability to strategically act in such moments and dynamically adjust the testing strategy can make the difference between success and failure.

Efficiency in quality assurance is heightened by consciously and frugally managing resources while simultaneously aiming for good quality. It is crucial to consistently weigh effort and benefit in your QA decisions. In the following sections, I will present various approaches that can help you achieve strategic superiority in quality assurance.

Understanding Your End Users’ Browser Preferences

In the realm of quality assurance, time is often a precious commodity. Test execution time multiplies with every additional browser when you test your web application on all available browsers. However, taking this labor-intensive path is not always necessary, especially when operating within limited time and resources. One key tactic is to focus on what truly matters. Begin by understanding your end users’ preferences. Which browsers do they use most frequently?

Imagine that 95 percent of your users prefer Chrome, while 4 percent use Edge and only 1 percent opt for Firefox. An issue affecting Firefox may be inconvenient but impacts only a small user group. If necessary, these users could switch to Chrome or Edge without significant effort. A Chrome-related issue, on the other hand, would be more serious and would likely require immediate attention. Here, the priority becomes evident: you want to ensure the majority of users have a seamless experience. It’s unrealistic to expect them to switch to another browser to circumvent the issue.

By concentrating on the most essential browsers, you conserve valuable resources and time that can be utilized for other aspects of quality assurance. A strategic browser approach is, therefore, a crucial step toward achieving maximum quality with minimal effort.
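The browser decision can be made explicit with a few lines of code. The following is a minimal sketch, not a prescribed method: the function name `browsers_to_cover` and the idea of a target coverage in percent are assumptions for illustration, and the usage shares are the ones from the example above.

```python
def browsers_to_cover(usage_share, target_coverage):
    """Return the most-used browsers whose combined user share (in percent)
    reaches target_coverage, together with the coverage actually reached."""
    selected = []
    covered = 0
    # Walk through the browsers from most to least used.
    ranked = sorted(usage_share.items(), key=lambda item: item[1], reverse=True)
    for browser, share in ranked:
        if covered >= target_coverage:
            break
        selected.append(browser)
        covered += share
    return selected, covered


# Usage shares from the example above, in percent of users.
usage_share = {"Chrome": 95, "Edge": 4, "Firefox": 1}

print(browsers_to_cover(usage_share, target_coverage=95))  # (['Chrome'], 95)
print(browsers_to_cover(usage_share, target_coverage=99))  # (['Chrome', 'Edge'], 99)
```

Raising the target from 95 to 99 percent adds a second browser to every test run, which makes the cost of the last few percent of coverage visible.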

Streamline your tests to the most widely used browsers, saving valuable resources and precious time.

Furthermore, it is advisable to ask developers to test primarily with the most common browsers or, alternatively, to spread their tests across different browsers for broader coverage. Either way, development and optimization stay aligned with the needs of a wide user base.

Randomized Browsers Instead of Cross-Browser

Imagine your users are evenly distributed among Chrome, Edge, and Firefox, and you need to conduct tests on all these browsers. This can significantly extend test execution time, a luxury you typically don’t have, especially during test reruns in the testing phase.

A proven method for automated (regression) tests is the use of randomized browsers. In this approach, a browser is randomly selected for each test rerun. While this may initially seem like a gamble, it has a distinct advantage: over the course of test reruns, virtually all browsers are covered. This means your tests provide a balanced coverage without requiring testing each browser in every execution.
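A minimal sketch of the randomized approach might look as follows. The browser identifiers and the function names are assumptions, and deriving the choice from a per-run seed is a design choice of this sketch: logging the seed lets you repeat a failed run on the same browser.

```python
import random

BROWSERS = ["chrome", "edge", "firefox"]  # assumed browser identifiers


def pick_browser(run_seed):
    """Pick one browser for a test run. The same seed always yields the
    same browser, so a failed run can be reproduced."""
    return random.Random(run_seed).choice(BROWSERS)


def coverage_after(run_seeds):
    """Return the set of browsers that were used across the given runs."""
    return {pick_browser(seed) for seed in run_seeds}


# Each rerun uses a new seed, for example the build number.
for build_number in range(1, 6):
    print(build_number, pick_browser(build_number))

print(sorted(coverage_after(range(1, 31))))
```

A single run exercises only one browser, so the coverage builds up across reruns rather than within one execution.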

An alternative method is to divide your tests by themes and test these themes with different browsers. For the next test run, change the browser selection for the themes. This ensures that each theme is thoroughly tested with all browsers over time. This approach allows for efficient coverage without extending test execution time.
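The rotation of themes across browsers can be sketched as a simple round-robin assignment. The theme names and the function `browser_for_theme` are hypothetical and only illustrate the idea.

```python
def browser_for_theme(themes, browsers, run_number):
    """Assign one browser to each theme and shift the assignment by one
    position with every run."""
    return {
        theme: browsers[(index + run_number) % len(browsers)]
        for index, theme in enumerate(themes)
    }


themes = ["login", "checkout", "search"]  # hypothetical test themes
browsers = ["chrome", "edge", "firefox"]

for run_number in range(3):
    print(run_number, browser_for_theme(themes, browsers, run_number))
```

After as many runs as there are browsers, every theme has been executed once on every browser, while each single run still executes each theme only once.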

The choice between these methods depends on the specific situation. However, the focus is on maximizing test coverage across different browsers without unduly extending test execution time. This way, you can optimize your test resources and still ensure your application functions smoothly on the most common browsers.

Number of Operating Systems Multiplies Test Execution Time

One of the challenges in software quality assurance is ensuring that your application functions seamlessly on various operating systems. Testing on Windows, macOS, and Ubuntu can significantly increase test execution time and tie up resources.

To master this balancing act among different operating systems, it’s important to initially understand which operating systems your users most commonly utilize. This allows you to concentrate your resources on the most frequently used platforms. In most cases, it makes sense to primarily test on the most widely used operating system as it covers the largest user base.

Once you’ve conducted your tests on the main operating system, consider extending a portion of your tests to the other operating systems on a representative basis. The emphasis should be on operating system-specific tests and functionalities. This way, you can reduce test execution time while ensuring your application functions across different platforms.

Another essential aspect is analyzing the errors found on an operating system. The number of discovered errors often provides insights into whether additional tests are needed on that platform. If an operating system exhibits a high number of errors, it may indicate the necessity for more intensive testing to identify and address potential issues.

It is also advisable to divide tests and rotate them across different operating systems instead of executing all tests on all platforms in every run. This allows you to utilize test resources more efficiently while still ensuring the robustness of your application across various operating systems.

Choosing the right strategy for selecting operating systems and organizing tests can help optimize test execution time while ensuring that your software functions seamlessly on the platforms most commonly used by your users.

Comprehensive Overview

Imagine your QA team as an orchestra, where each member plays an instrument. For the music to resonate harmoniously and flawlessly, you need a conductor overseeing the entire ensemble. Similarly, achieving strategic superiority requires at least one member of your QA team to maintain a comprehensive overview of all aspects relevant to the quality assurance process. This includes not only the tests themselves but also the test environments, peculiarities of your software, and test coverage. The comprehensive overview aids in enhancing the quality of strategic decisions and utilizing resources more efficiently. Overall, the comprehensive overview plays a key role in the strategic alignment of software quality assurance. It enables your team to establish the right priorities, minimize risks, and ultimately deliver software of the highest quality.

The Dance with Bugs: Prioritization

Bug management is an essential process in QA. It involves capturing, monitoring, reporting, prioritizing, fixing, and verifying bugs, which are issues or problems in a software application. It’s important to understand that not every identified bug necessarily needs to be fixed, especially not immediately. Strategic evaluation and prioritization of bugs are crucial to efficiently utilize limited resources. This process requires a clear distinction between the severity and urgency of bugs to ensure effective bug management.

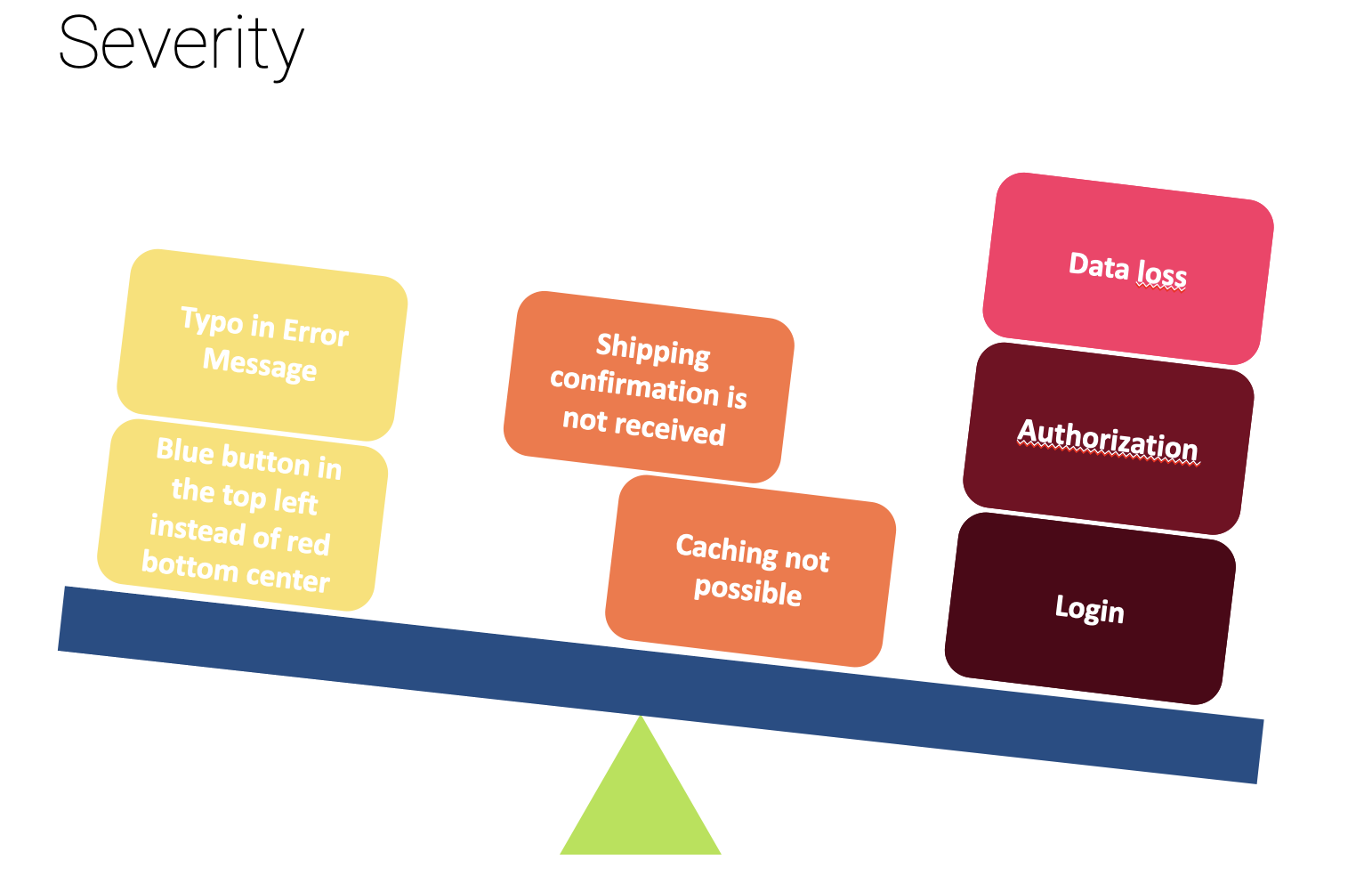

Severity: This criterion evaluates the impact of a bug on the application and users. Severity is typically categorized as follows:

- Critical: The application is non-functional or experiences data loss.

- High: A significant feature is impaired, but the application continues to function.

- Medium: A non-critical feature is affected.

- Low: Cosmetic error minimally impacting the user experience.

Example: An e-commerce shop has a bug where orders are randomly lost. This is a critical issue as it can lead to revenue loss and customer attrition.

Urgency: This criterion considers the timeframe within which a bug needs to be addressed. A bug can be urgent if it requires immediate attention, for example, to meet a critical deadline or customer expectation.

Example: Suppose the same e-commerce shop has a cosmetic bug where an image on the homepage is slightly misaligned. While not a severe impairment, if this bug occurs just before a significant sales event, its urgency may increase.

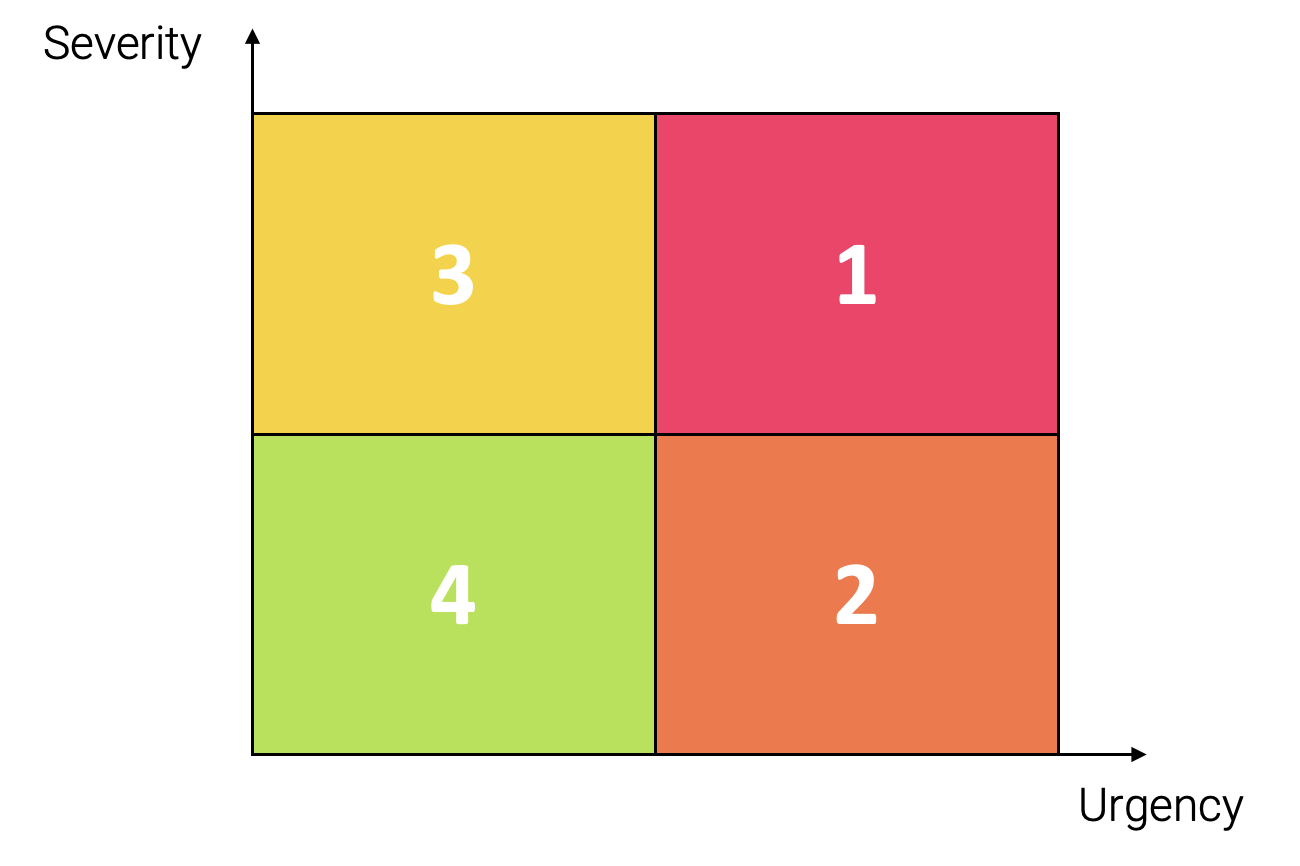

Distinguishing Severity and Urgency in Prioritization:

- A bug with high severity and high urgency should have top priority since it is both severe and requires swift resolution.

- A bug with low severity but high urgency might need to be addressed quickly, for example, to patch a security vulnerability, even if it doesn’t impact the application’s functionality.

- A bug with high severity and low urgency may not necessarily require immediate fixing, despite its significant impact, if there are no immediate deadlines or customer requests.

- A bug with low severity and low urgency can be assigned the lowest priority and possibly addressed later.
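The four combinations above can be written down as a small decision function. This is a sketch under assumptions: the numeric priority levels, the two-level urgency scale, and the choice to rank urgent low-severity bugs ahead of non-urgent high-severity bugs are illustrative and would need to match your own guidelines.

```python
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


class Urgency(IntEnum):
    LOW = 1
    HIGH = 2


def priority(severity, urgency):
    """Map severity and urgency to a priority from 1 (top) to 4 (lowest)."""
    severe = severity >= Severity.HIGH
    urgent = urgency == Urgency.HIGH
    if severe and urgent:
        return 1  # severe and needs swift resolution
    if urgent:
        return 2  # low severity, but must be handled quickly
    if severe:
        return 3  # significant impact, but no immediate deadline
    return 4      # can be addressed later


# The two e-commerce examples from above.
print(priority(Severity.CRITICAL, Urgency.HIGH))  # 1 (orders are randomly lost)
print(priority(Severity.LOW, Urgency.HIGH))       # 2 (misaligned image before a sales event)
```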

User Impact: This assesses how many users are affected by a bug and the extent to which their experience is compromised. A bug that impacts many users or disrupts their primary tasks takes higher priority.

Business Impact: This criterion considers the financial implications of a bug on the company. A bug leading to revenue loss or legal consequences is prioritized higher.

Reproducibility: The ease or difficulty of reproducing a bug can influence prioritization.

Dependencies: If fixing a bug requires addressing other bugs or depends on external factors, this should be considered in prioritization.

Historical Data: Analyzing historical data can help identify patterns in recurring bugs, prioritizing them for long-term improvements.

Customer Feedback: Bugs that customers actively report or complain about should be strongly considered for prioritization. If such a bug recurs in subsequent versions, customer dissatisfaction may escalate.

Regulatory Requirements: Bugs violating laws or industry standards should be prioritized higher.

Risk Acceptance: In some cases, a company may choose to accept a lower-priority bug if fixing it requires significant effort and the risk is low.

The exact criteria and weightings may vary based on the company, product, and project context. It’s essential to establish clear prioritization guidelines, ensuring the entire team understands and adheres to these criteria. This enables consistent and effective bug prioritization.
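One way to make such guidelines consistent is a weighted score. The sketch below is an assumption-laden illustration: the weights, the rating scale from 0 to 3, and the bug ratings are invented for the example and are not recommendations.

```python
# Hypothetical weights; each team has to agree on its own values.
WEIGHTS = {
    "severity": 3,
    "urgency": 3,
    "user_impact": 2,
    "business_impact": 2,
    "regulatory": 2,
    "customer_feedback": 1,
}


def bug_score(ratings, weights=WEIGHTS):
    """Weighted sum of ratings from 0 (none) to 3 (high).
    Criteria without a rating count as 0."""
    return sum(weight * ratings.get(criterion, 0) for criterion, weight in weights.items())


bugs = {
    "orders randomly lost": {"severity": 3, "urgency": 3, "user_impact": 2, "business_impact": 3},
    "homepage image misaligned": {"severity": 0, "urgency": 2, "user_impact": 1},
}

ranked = sorted(bugs, key=lambda name: bug_score(bugs[name]), reverse=True)
for name in ranked:
    print(bug_score(bugs[name]), name)
# 28 orders randomly lost
# 8 homepage image misaligned
```

A score like this does not replace judgment, but it forces the team to state its weightings and apply them the same way to every bug.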

As the end of the testing phase approaches, the number of newly found bugs should decrease. If the bug count has not decreased by the release, the causes should be examined after the release (e.g., in retrospectives) and addressed or avoided in future releases.
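Whether the bug count is actually going down can be checked with a simple comparison of two time windows. This is only a sketch: the weekly granularity, the window size, and the function name `bug_trend_declining` are assumptions.

```python
from statistics import mean


def bug_trend_declining(found_per_week, window=2):
    """Compare the average number of bugs found in the last `window` weeks
    with the average of the `window` weeks before that."""
    if len(found_per_week) < 2 * window:
        raise ValueError("need at least two full windows of data")
    recent = mean(found_per_week[-window:])
    previous = mean(found_per_week[-2 * window:-window])
    return recent < previous


print(bug_trend_declining([12, 15, 9, 6, 4, 2]))  # True
print(bug_trend_declining([5, 6, 8, 9]))          # False
```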

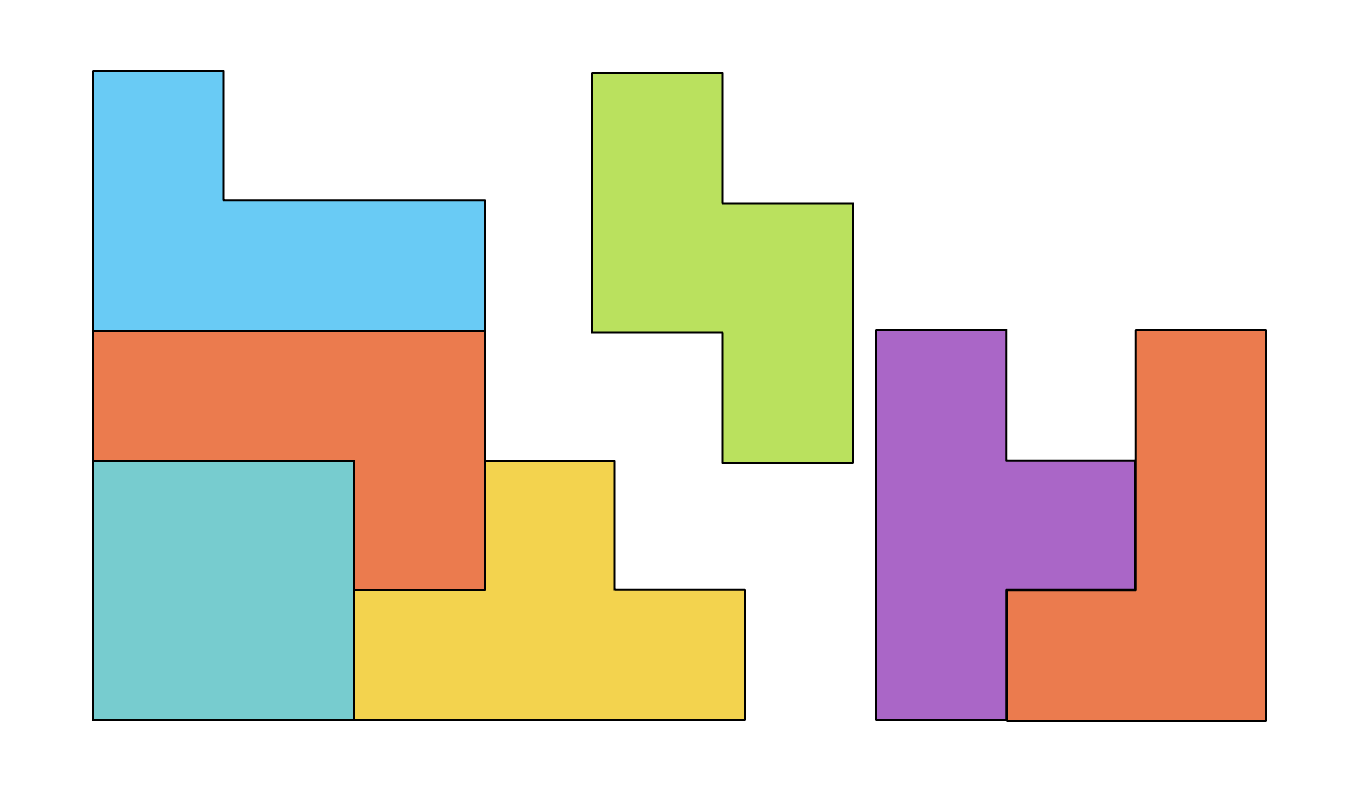

Test Case Tetris: Efficient Management of Growing Test Cases

“Tetris” is a popular video game where blocks in various shapes fall, and the player must arrange them to form a continuous horizontal line. Once a horizontal line is complete, it disappears, and the player earns points. However, as the Tetris blocks relentlessly stack up, it becomes increasingly challenging to clear the situation, and the player eventually loses the game. In software quality assurance, a similar situation arises with test cases. As time progresses and the complexity of the software grows, the number of required test cases also increases.

However, this leads to increased maintenance efforts and longer test execution times, significantly impacting the efficiency of your QA activities.

Scaling efforts pose a serious challenge and can eventually cripple your QA team. It is important to take early measures to address and counteract this problem. Here are some helpful approaches to tackle this dilemma:

Compactification of Test Cases: Critically review your existing test cases and attempt to optimize them. Reduce unnecessary steps and focus on the essentials. Test cases should be compact and effective to avoid redundancies and unnecessary complexity.

Implicit and Explicit Testing: Avoid duplicate testing efforts. Actions or processes already covered in other test cases, such as in preparation, do not need to be explicitly tested again.

Focus on Essentials: Identify the main purpose of each test case and reduce it to the minimum needed to fulfill that purpose. Precisely identifying the test objective allows you to streamline test cases without compromising quality.

Joint Test Execution: Test cases with similar or identical preconditions can be executed together, where possible, to minimize test preparation efforts.

Monitoring Test Execution Times: Keep an eye on the time required for test execution. Compare actual execution time with planned time to identify deviations. For automated tests, compare “Realtime” and “Executiontime” to detect time losses.

Reduction of Wait Times: Minimize wait times (Sleeptime) in automated tests to the necessary minimum. For instance, you can use an Application Busy Detector waiting for specific conditions, such as the disappearance of loading indicators, before proceeding to the next step.

Root Cause Analysis for Increasing Execution Times: If the execution times of a test suite increase, identify and address the causes early to preserve efficiency. Revise and optimize your (automated) tests to reduce execution times and enhance maintainability.

Refactorings: When test cases or test environments become outdated or unwieldy, conduct refactorings to minimize maintenance efforts and enable extensibility.
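The reduction of wait times mentioned above can be illustrated with a small polling helper that replaces fixed sleeps. This is a generic sketch and not the API of a specific test tool: `wait_until` and `loading_indicator_gone` are hypothetical names, and the loading indicator is simulated with a timestamp.

```python
import time


def wait_until(condition, timeout=10.0, interval=0.1):
    """Poll condition() until it returns True or `timeout` seconds have passed.
    Returns True if the condition was met, False on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


# Simulated application: the loading indicator disappears after 0.3 seconds.
loading_done_at = time.monotonic() + 0.3


def loading_indicator_gone():
    return time.monotonic() >= loading_done_at


started = time.monotonic()
ready = wait_until(loading_indicator_gone, timeout=5.0)
elapsed = time.monotonic() - started
print(f"ready={ready}, continued after {elapsed:.2f} s instead of a fixed 5 s sleep")
```

The test continues as soon as the application is ready, and the timeout only comes into play when something is actually wrong.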

A thoughtful strategy for handling the growing number of test cases is indispensable to ensure that your QA activities remain efficient and cost-effective, even in complex, long-running projects.
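For the monitoring of execution times, a comparison of planned and actual durations might be sketched as follows. The test names, the durations in seconds, and the tolerance of 20 percent are assumptions for illustration.

```python
def find_deviations(planned_s, actual_s, tolerance=0.2):
    """Return {test name: relative overrun} for every test whose actual
    duration exceeds the planned duration by more than `tolerance`."""
    deviations = {}
    for test, planned in planned_s.items():
        actual = actual_s.get(test)
        if actual is None:
            continue  # test was not executed in this run
        relative = (actual - planned) / planned
        if relative > tolerance:
            deviations[test] = round(relative, 2)
    return deviations


planned = {"login": 30, "checkout": 120, "search": 60}  # seconds
actual = {"login": 31, "checkout": 180, "search": 66}   # seconds

print(find_deviations(planned, actual))  # {'checkout': 0.5}
```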

Prioritizing Test Cases

Prioritizing test cases is akin to prioritizing bugs, and it should be based on their impact on end-users’ workflows. This step enables your QA team to focus on the most critical aspects of your application, minimizing risks even under unfavorable circumstances—strategic superiority.

Test case prioritization typically occurs in various tiers:

Blocker: These are test cases essential for the basic operation of your application, such as the login process or permission management.

Critical: Critical test cases involve functions that, while not blocking the entire workflow, are still of high importance. Examples include payment methods or data transfers.

Major: Relevant but not immediately business-critical, these test cases cover aspects of considerable significance for the overall functionality and user experience of your software product. Examples include password recovery and search functionality.

Normal: Test cases in this category address general functionalities that complete the application but have no immediate impact on the end-user’s workflow. For instance, test cases for filter and sorting options.

Minor: Test cases of this nature relate to aspects of minor relevance for the normal operation of the application, such as the position of a button or the exact wording of an error message.

When time is limited, prioritize executing blocker and critical test cases first to ensure essential functions work flawlessly. If time permits, then move on to major and normal test cases. In this context, it is highly important to conduct all key checks in all areas when time is scarce.
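A tier-based selection under a time budget can be sketched like this. The data layout, the durations, and the function name `plan_run` are hypothetical. The sketch skips a test case that no longer fits and continues with smaller ones, which is one possible design choice among several.

```python
TIER_ORDER = ["blocker", "critical", "major", "normal", "minor"]


def plan_run(test_cases, time_budget_min):
    """Select test cases tier by tier until the time budget is used up.
    Returns the selected names and the minutes they take in total."""
    ordered = sorted(test_cases, key=lambda tc: TIER_ORDER.index(tc["tier"]))
    selected, used = [], 0
    for tc in ordered:
        if used + tc["minutes"] <= time_budget_min:
            selected.append(tc["name"])
            used += tc["minutes"]
    return selected, used


test_cases = [
    {"name": "sort results", "tier": "normal", "minutes": 10},
    {"name": "login", "tier": "blocker", "minutes": 5},
    {"name": "payment", "tier": "critical", "minutes": 20},
    {"name": "password recovery", "tier": "major", "minutes": 15},
    {"name": "button position", "tier": "minor", "minutes": 5},
]

print(plan_run(test_cases, time_budget_min=40))
# (['login', 'payment', 'password recovery'], 40)
```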

Test case prioritization is a strategic decision that helps ensure the quality of your application while remaining flexible in response to varying time and resource constraints. This targeted approach allows your QA team to keep the essentials in focus.

Sampling Approach

When time is insufficient for comprehensive testing and several tests share the same priority, it is advisable to execute a random sample of them instead of all of them.
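Drawing such a sample takes only a few lines. In this sketch the test names and the function `sample_tests` are hypothetical. The seed is passed in explicitly so that the same sample can be drawn again when a run has to be repeated.

```python
import random


def sample_tests(test_names, sample_size, seed):
    """Draw a reproducible random sample from tests of equal priority."""
    rng = random.Random(seed)
    size = min(sample_size, len(test_names))
    return sorted(rng.sample(list(test_names), size))


normal_tests = ["filter by price", "filter by brand", "sort by date",
                "sort by name", "pagination", "export list"]

print(sample_tests(normal_tests, sample_size=3, seed=2023))
```

Using a different seed for each run spreads the sample across all tests of that priority over time.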

Old and Unchanged

Areas of your application that are old and unchanged rank below new or modified functionality. When time is scarce, test them after the new and changed areas.

New or Modified Features

In contrast to unchanged areas in your application, new or modified functionalities take precedence. It’s crucial to ensure that the implemented requirements meet the expectations of the customer or end user.

Unlike developers, you test from the end user’s perspective. Do not rely solely on developer tests. Firstly, these tests take place in isolated environments, not on the end product. Secondly, they may verify requirements that were inadequate or misunderstood.

The Happy Path

Maximum quality of a software product is not only about being bug-free or passing negative test cases, but about ensuring that the software operates optimally and reliably in the central functions expected by the end user.

The “Happy Path” in quality assurance describes the primary functionalities that an end user should be able to execute without errors. Happy Path scenarios are typically prioritized higher than negative test cases, as they form the basis for the fundamental functionality of the software. Therefore, before delving into negative tests and exceptional situations, it is important to ensure that regular workflows function seamlessly.

By prioritizing the most likely and commonly occurring user scenarios, quality is maximized in the key areas of your application. This results in an enhanced user experience, helping your software better meet the requirements and expectations of end users, ultimately gaining their trust. That is strategic superiority.

Where There’s Smoke, There’s Usually Fire

If you discover bugs in a specific area of your application, it makes sense to dig deeper. When the number of bugs in a particular area is high, quality assurance in that area typically deserves higher priority.
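Finding such hot spots can be as simple as counting bugs per application area. The area names, the threshold, and the function `hot_spots` in this sketch are assumptions.

```python
from collections import Counter


def hot_spots(bug_areas, threshold):
    """Return (area, bug count) pairs with at least `threshold` bugs,
    ordered from most to fewest bugs."""
    counts = Counter(bug_areas)
    return [(area, count) for area, count in counts.most_common() if count >= threshold]


# One entry per reported bug, naming the affected area (hypothetical data).
bug_areas = ["checkout", "search", "checkout", "login", "checkout", "search"]

print(hot_spots(bug_areas, threshold=2))  # [('checkout', 3), ('search', 2)]
```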

Efficient Test Case Selection

Test case selection for test runs and test reruns is a critical step in software quality assurance. It maximizes the efficiency of test execution while safeguarding software quality. The process involves carefully selecting the test cases to be executed in a specific test run or test rerun. By choosing the minimum set of required test cases, you can verify features and bug fixes with the right balance between efficiency and quality.

Here are some steps that can help you in selecting the right test cases:

Identification of Goals: Clearly define the goal of the test run or test rerun. This could include verifying new features, validating bug fixes, or assessing the overall performance of the application.

Prioritization: Prioritize test cases based on their importance. Critical functions or those affecting key business processes should be given priority. Prioritization is often based on risk analysis and business requirements.

Identify Dependencies: Understand the dependencies between different features and modules of your application. This helps in selecting test cases that cover critical interactions.

Utilize Historical Data: Previous test results and bug reports can provide useful insights into which test cases should be re-executed. This is particularly helpful to ensure that previously identified issues have been resolved.

Application Changes: If there have been changes to the application since the last test run, select test cases that cover these changes. This is crucial to ensure that new features or bug fixes work correctly.

Time Management: Select test cases in a way that the test execution can be completed within an acceptable time frame. This requires considering resource constraints and schedules.

Test Coverage: Ensure that the selected test cases provide adequate coverage of the application. This includes testing basic functionalities, edge cases, and different application areas.

Test Matrix: Create a test matrix to document the selected test cases and their coverage. This helps in keeping track and ensuring that all important aspects are covered.

Automation: Automated test cases are often faster and more consistent in execution. Therefore, select appropriate test cases for automation to increase efficiency.

Documentation and Tracking: Clearly document the selected test cases and track their execution. This enables later traceability of results and targeted reporting.

Adaptability: Test case selection is not set in stone. Be ready to adapt your strategy if requirements or conditions change. This allows you to be flexible in responding to new information or developments.

The selection of test cases is an iterative process that requires careful planning, analysis, and consideration. By choosing test cases carefully and focusing on those likely to provide valuable insights, you can use time and resources more efficiently while ensuring high test quality.
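Two of the steps above, application changes and historical data, lend themselves to a simple automated pre-selection. The following sketch assumes that every test case is tagged with the application area it covers. The data and the function `select_for_rerun` are hypothetical.

```python
def select_for_rerun(test_cases, changed_areas, failed_last_run):
    """Select test cases that cover a changed area or failed in the last run.
    Each result carries the reasons, which supports documentation and tracking."""
    selected = []
    for tc in test_cases:
        reasons = []
        if tc["area"] in changed_areas:
            reasons.append("covers changed area")
        if tc["name"] in failed_last_run:
            reasons.append("failed in last run")
        if reasons:
            selected.append((tc["name"], reasons))
    return selected


test_cases = [
    {"name": "login", "area": "accounts"},
    {"name": "pay by card", "area": "checkout"},
    {"name": "filter results", "area": "search"},
    {"name": "apply voucher", "area": "checkout"},
]

selection = select_for_rerun(
    test_cases,
    changed_areas={"checkout"},
    failed_last_run={"filter results", "apply voucher"},
)
for name, reasons in selection:
    print(name, "->", ", ".join(reasons))
```

The result is a starting point for the rerun. Goals, time limits, and coverage still have to be weighed by the team.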

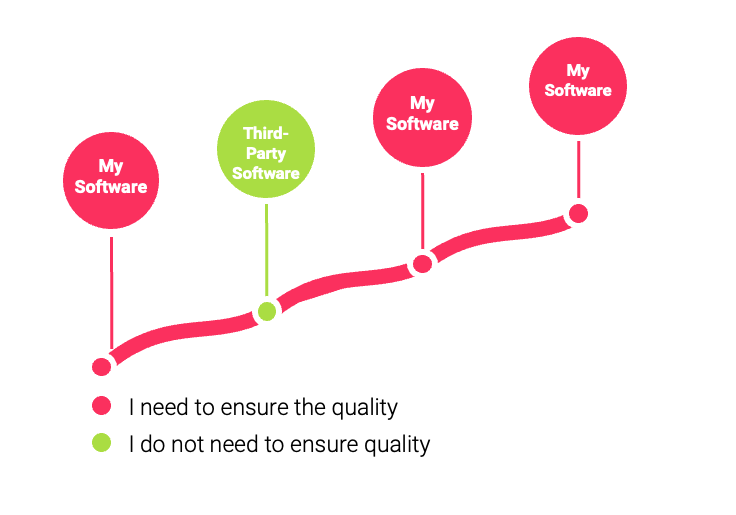

Third-Party Software

When incorporating components of third-party software into your application, it is essential to test both your own software and the integration of the third-party software. Typically, testing the third-party software itself is not your responsibility.

Backdoor for Quality Assurance

At times, creating the elaborate prerequisites needed to start testing can be challenging. If test preparations are not feasible or are difficult to implement, this can become a blocker for quality assurance. Therefore, it’s essential to identify these risks before testing phases and take appropriate measures in a timely manner:

- Automating the provisioning of test data, certificates, entities to be migrated in the next release, etc.

- Freezing necessary prerequisites, such as creating dumps, templates, etc.

- Simplifying a highly elaborate preparation process by providing a backdoor visible only to quality assurance.

This approach can save valuable efforts and, at the same time, minimize the risk of a quality assurance blocker.

Is There a More Efficient Alternative?

Before embarking on a seemingly inevitable path that comes with expensive efforts, take the time to explore alternative solutions or shortcuts. Always keep your quality assurance efforts in mind. Look for potential solutions, such as automation, to eliminate or at least reduce efforts that are above average, that scale with the product, or that recur in quality assurance.

Summary

Strategic superiority in software quality assurance requires a smart strategy focused on prioritization and efficiency. By strategically reducing tests, selecting test environments wisely, and focusing on essentials, you can enhance software quality while optimizing time and resources. This is the key to achieving maximum quality with minimal effort.

In Part 3, you’ll learn how an efficient quality assurance strategy begins right from the test case. (Coming online soon)

The entire series at a glance:

Part 1: Mastering Quality Assurance in Enterprise Software: Maximizing Quality with Minimal Effort

Part 2: Enterprise Software Quality Assurance: Strategic Superiority

Part 3: Enterprise Software Quality Assurance: Quality Begins with the Test Case

Part 4: Enterprise Software Quality Assurance: Skills and Competencies